Where Tech Meets Bio #1: Convergence of technologies

Intro, my analytical framework, and what to expect in future newsletters

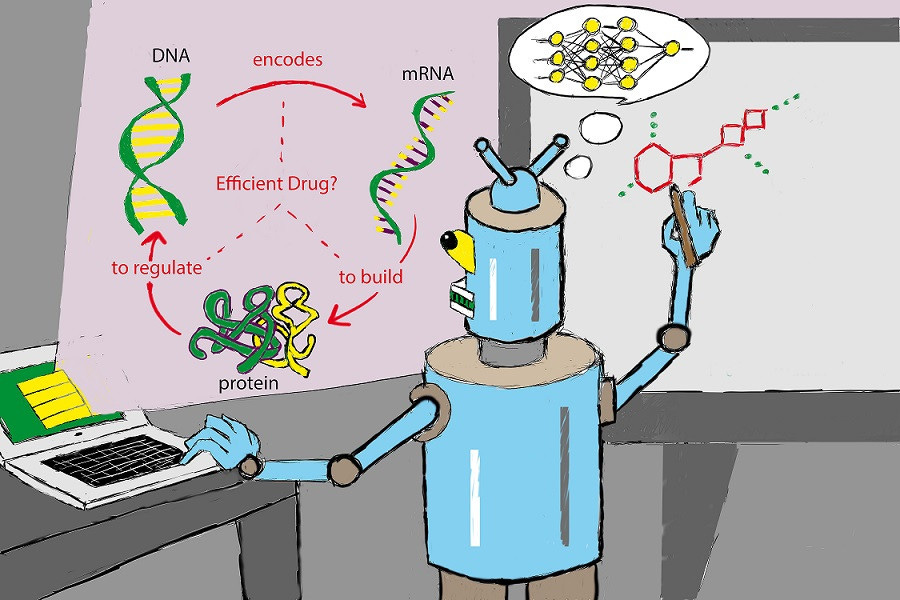

Welcome to Where Tech Meets Bio, where I review paradigm-changing discoveries in science and technology, analyze the impact of novel technologies on the pharma and biotech industries, and profile the leading companies, products, and technologies shaping our lives in the “century of biotech.”

Prologue

Back in 2016, I wrote my first article about artificial int…